Data rules everything in business today. However, it is only as useful as it is accessible. With so many ways to collect different types of data, unstructured data sets can quickly become more of a bane than an asset in an enterprise environment.

Because implementing training data for AI solutions can be so difficult and time-consuming, many organizations fail to pursue or deploy AI projects that could help them better utilize unstructured data. Studies show that data scientists spend 60% of their time simply collecting data and making it usable.

As a consequence, only half of large enterprises (i.e., those with more than 100,000 employees) have an established AI strategy, according to MIT Sloan Management Review. In addition, one-third of enterprises acknowledge that more than half of their data remains unused.

The challenges associated with training data are well documented, so what is holding organizations back from overcoming them? As it turns out, a simple change can make a monumental difference: adopting a hybrid approach to AI.

What is Training Data?

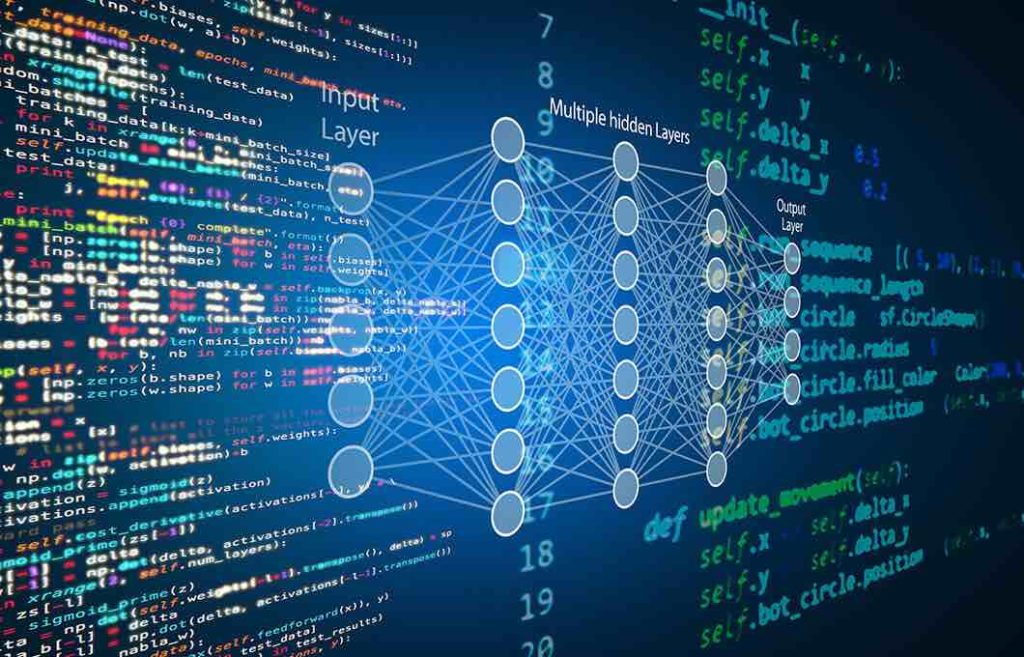

Let’s start from the top: what exactly is training data? Training data is the large dataset used to teach a machine learning model. It is essentially the Rosetta Stone for any machine learning model, in that it teaches the model the fundamentals of the task it will perform within an enterprise.

Why Does Training Data Matter?

Without training data, there is no model. An accurate machine learning model is built upon a comprehensive, high-quality training dataset. Building this dataset is an essential first step, but it is also a primary stumbling block on the way toward model deployment and project success.

MIT Sloan’s survey revealed the following about data scientists’ struggles with training data:

- 81% of data scientists admit that training AI with data is more difficult than they expected

- 76% attempt to label and annotate training data on their own to overcome the challenges

- 63% try to build their own labeling and annotation automation tech, adding significantly more time to the process without guaranteeing success

Today, fewer than 4% of organizations report that training data presents no problems. The problems stem from factors that include bias or errors in data, scarcity of data, data in unusable formats and a lack of the tools or people needed to properly label data. This is a glaring concern, as training data is the cornerstone of any machine learning model.

Despite the enterprise’s poor track record of building quality training sets, companies continue to follow the same tired approach to the process. This is not a recipe for success, as it puts organizations at a disadvantage from the outset.

How a Hybrid Approach Overcomes Training Data Problems

By leveraging a hybrid approach, organizations can assemble more reliable training sets that not only use smaller amounts of data but also demand less time and fewer resources for data preparation. The following sections detail key areas in which a hybrid approach can impact your training data.

Accelerated Annotation

As we touched on before, many organizations experience problems with data quality. Training data requires thorough and detailed data annotation, which is extremely time-consuming and costly.

A hybrid approach can help you accelerate data exploration and annotation by exploiting a rule-based knowledge graph. With embedded human knowledge analyzing your data, you can automate the tagging of your data without sacrificing data quality. You simply validate or correct the results upon receipt.

The end result is considerably easier and faster data annotation. It also helps you improve upon the current first-time data pass rate, which sits below 50%.
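To make the pre-annotation idea concrete, here is a minimal sketch of rule-based tagging followed by a human validation pass. Everything in it is an assumption for illustration: the `RULES` patterns, the `pre_annotate` and `review` helpers, and the sample sentence are hypothetical, and two regular expressions stand in for what would in practice be a far richer knowledge graph.

```python
import re

# Hypothetical rules (label -> pattern). A real knowledge graph would encode
# far more than two regular expressions; these only illustrate the workflow.
RULES = {
    "ORG": re.compile(r"\b(?:Acme Corp|Globex)\b"),
    "MONEY": re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?"),
}

def pre_annotate(text):
    """Return rule-proposed annotations as (start, end, label, surface) tuples."""
    spans = []
    for label, pattern in RULES.items():
        for match in pattern.finditer(text):
            spans.append((match.start(), match.end(), label, match.group()))
    return sorted(spans)

def review(spans, rejected=frozenset()):
    """Simulate the human pass: keep every proposal the reviewer did not reject."""
    return [span for span in spans if span[3] not in rejected]

text = "Acme Corp paid $1,200 to Globex."
proposed = pre_annotate(text)                     # machine proposes
accepted = review(proposed, rejected={"Globex"})  # human validates or corrects
```

The reviewer only confirms or rejects proposals rather than tagging from scratch, which is where the time savings would come from.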

Model Scalability

Model scalability depends on how difficult it is to annotate data or set up knowledge bases using domain expertise. As most organizations lack talent with expertise in both the domain and ML, these processes can be expensive and time-consuming.

Pure machine learning models cannot scale without pre-annotated data, and they inherently lack human knowledge, both general and domain-specific. A symbolic approach alone is not ideal either, as it fails to scale in specific circumstances, such as high-volume tasks.

A hybrid approach brings symbolic and machine learning techniques together, requiring fewer rules and fewer annotations to achieve the same result. It also enables you to automatically generate symbolic rules, simplifying and accelerating annotation of training sets. This not only helps you jumpstart projects, but makes it easy to scale on the go.
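As a rough illustration of what automatically generating rules could look like, the sketch below derives keyword rules from a handful of annotated examples: a token becomes a rule if it appears under exactly one label at least `min_count` times. This is a deliberately naive heuristic chosen for brevity, not a description of how any particular product induces rules; the function name, threshold, and sample data are all hypothetical.

```python
from collections import defaultdict

def induce_keyword_rules(examples, min_count=2):
    """Derive {token: label} rules from tokens seen under exactly one label."""
    counts = defaultdict(lambda: defaultdict(int))
    for text, label in examples:
        # Count each token once per example so long texts do not dominate.
        for token in set(text.lower().split()):
            counts[token][label] += 1
    rules = {}
    for token, by_label in counts.items():
        if len(by_label) == 1:  # token is unambiguous across labels
            (label, n), = by_label.items()
            if n >= min_count:  # and seen often enough to trust
                rules[token] = label
    return rules

# Hypothetical annotated examples.
examples = [
    ("refund my order", "billing"),
    ("refund was charged twice", "billing"),
    ("app crashes on login", "technical"),
    ("login page crashes", "technical"),
    ("my app order", "other"),
]
rules = induce_keyword_rules(examples)
```

Rules induced this way can then pre-label new data, so a small annotated set goes further than it would on its own.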

Adaptability

Change is inevitable when it comes to data. However, not all AI models are built to withstand change, especially when it is unexpected. So while pure machine learning is sufficient for extracting keywords or phrases from text, information from outside the enterprise’s knowledge base is crucial for more complex queries.

Training data prepares a model for specific scenarios under specific parameters. Any changes to the status quo (e.g., new features or unknown entities) can lead to model drift if training data is not quickly amended.

Pure machine learning models are not always quick to identify changes, if they identify them at all. Plus, to retrain a model, you must reannotate your training data, which, as you know, is slow and tedious. On the other hand, a symbolic approach enables you to update rules at any time to address specific changes in data.

A hybrid approach is especially effective in this case as it pairs machine learning predictions with symbolic rules to more easily extract relational information. Thus, as you process training data, you can establish rules specific to sequences of data, adding valuable context to your model that reduces future model maintenance. This is all done while making your model more capable of extracting complex information.
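One way to picture this pairing is a classifier that trusts the model when it is confident and falls back to symbolic rules otherwise, so that a new entity can be handled by adding a rule instead of retraining. The sketch below makes several assumptions: `model_predict` is a hard-coded stand-in for a trained model, and the `HybridClassifier` class, its threshold, and the "QuickPay" entity are hypothetical. Letting rules apply only to low-confidence predictions is one design choice among several.

```python
def model_predict(text):
    """Stand-in for a trained classifier: returns (label, confidence)."""
    if "invoice" in text.lower():
        return "billing", 0.9
    return "other", 0.4  # unfamiliar input yields a low-confidence guess

class HybridClassifier:
    def __init__(self, threshold=0.7):
        self.threshold = threshold
        self.rules = []  # (keyword, label) pairs, checked in insertion order

    def add_rule(self, keyword, label):
        """Register a symbolic rule; no retraining or reannotation needed."""
        self.rules.append((keyword.lower(), label))

    def predict(self, text):
        label, confidence = model_predict(text)
        if confidence >= self.threshold:
            return label, "model"
        lowered = text.lower()
        for keyword, rule_label in self.rules:
            if keyword in lowered:
                return rule_label, "rule"
        return label, "model-low-confidence"

clf = HybridClassifier()
query = "Question about my QuickPay transfer"
before = clf.predict(query)           # entity unknown to model and rules
clf.add_rule("quickpay", "payments")  # react to the change immediately
after = clf.predict(query)            # now resolved by the new rule
```

The same query goes from a low-confidence guess to a rule-backed label after a one-line update, while confident model predictions are left untouched.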

Feature Engineering

Feature engineering is the process of using domain knowledge of your training data to transform raw data into features (the input variables a model learns from). It is a crucial (and often time-consuming) step in model development.

Just as a hybrid approach can help a model work better with relational information, it can apply symbolic analysis on top of basic NLP capabilities to automatically draw on a much richer set of features. There is no need to feed specific sets of features (e.g., number of words, capitalized words, punctuation or stop words) into different algorithms. Instead, a hybrid approach takes a broader tack to support features like the most relevant concepts, word relationships and topics.

A hybrid approach also enables symbolic capabilities that navigate relationships between terms and concepts within the knowledge graph, integrating more flexibility and power into model development. This all accelerates training by using fewer examples and less time while improving performance.
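The difference between surface features and concept-level features can be sketched in a few lines. The example below is illustrative only: the `CONCEPT_OF` mapping is a hypothetical four-entry stand-in for a knowledge graph, and the stop-word list, function names, and sample sentence are assumptions.

```python
import string

STOP_WORDS = {"the", "a", "an", "of", "to", "and", "is", "in"}

# Hypothetical mini knowledge graph: term -> parent concept.
CONCEPT_OF = {
    "aspirin": "drug",
    "ibuprofen": "drug",
    "headache": "symptom",
    "fever": "symptom",
}

def surface_features(text):
    """Hand-crafted counts of the kind listed above."""
    tokens = text.split()
    return {
        "n_words": len(tokens),
        "n_capitalized": sum(t[0].isupper() for t in tokens),
        "n_punct": sum(ch in string.punctuation for ch in text),
        "n_stop": sum(t.lower().strip(string.punctuation) in STOP_WORDS
                      for t in tokens),
    }

def concept_features(text):
    """Count the concepts that the text's terms map to in the graph."""
    counts = {}
    for token in text.lower().split():
        concept = CONCEPT_OF.get(token.strip(string.punctuation))
        if concept:
            key = f"concept={concept}"
            counts[key] = counts.get(key, 0) + 1
    return counts

text = "Aspirin and ibuprofen reduce fever."
features = {**surface_features(text), **concept_features(text)}
```

The surface counts say little about what the sentence means, whereas the concept counts capture that it mentions two drugs and one symptom, a signal that generalizes to drug names the model has seen only rarely.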

The business landscape today is more competitive than ever. While most organizations understand how AI can help them better manage and analyze data, the barriers to AI entry remain high. Training data is one of the biggest pain points, but a hybrid approach to NLP that leverages both machine learning and symbolic techniques can help you overcome this hurdle. Read more about how expert.ai can help your organization take full advantage of language data.